Fifteen dissection part two: Instruments

[Previous instalments: part zero, part one.]

So we need to calculate PCM audio, and we have very little space to do it in. First we need some instruments.

I wrote a tool to play around with generating sounds algorithmically. (I've tidied it up a bit since the compo; here is the original for the curious. Particularly of note is the fact that sample values for both the old version and the entry itself were in the range -32768..32767, whereas now they're -1..1. Hence the code samples differ slightly.)

In the tune we have the following: lead, bass, bass drum, hihat, snare, two bongoids and two thud-type things.1

Notes

Melodic instruments can be pretty simple: just take a periodic waveform and decay it exponentially over time.

We start with a sine wave, which is pretty periodic.

var freq = 440;

return Math.sin(i * freq * 2*Math.PI / 32000);

And add some decay:

var freq = 440, decay = 0.0004;

return Math.sin(i * freq * 2*Math.PI / 32000) * Math.exp(-i*decay);

Sine waves sound really "thin", having just a single frequency. We need harmonics. A cheap way of doing this, I discovered, was to generate sinⁿ x instead of sin x: higher values of n give more harmonics. Here's n=9, the value used for the lead melody:

var freq = 440, decay = 0.0004, exponent = 9;

return Math.pow(Math.sin(i * freq * 2*Math.PI / 32000), exponent) * Math.exp(-i*decay);
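To check the claim that higher powers add harmonics, this sketch (not code from the entry) samples one period of sinⁿ x and measures each harmonic's amplitude with a naive DFT. The function name and the 1024-point resolution are arbitrary choices.

```javascript
// Amplitude of harmonics 0..maxK of sin^n(x) over one period,
// measured with a naive discrete Fourier transform of N samples.
function harmonicAmplitudes(n, maxK, N = 1024) {
  const samples = [];
  for (let j = 0; j < N; j++) {
    samples.push(Math.pow(Math.sin(2 * Math.PI * j / N), n));
  }
  const amps = [];
  for (let k = 0; k <= maxK; k++) {
    let re = 0, im = 0;
    for (let j = 0; j < N; j++) {
      const phase = 2 * Math.PI * k * j / N;
      re += samples[j] * Math.cos(phase);
      im += samples[j] * Math.sin(phase);
    }
    // The DC term is the plain mean; other harmonics need a factor of 2.
    const scale = k === 0 ? 1 / N : 2 / N;
    amps.push(scale * Math.hypot(re, im));
  }
  return amps;
}

console.log(harmonicAmplitudes(1, 4).map(a => a.toFixed(3)));
console.log(harmonicAmplitudes(3, 4).map(a => a.toFixed(3)));
console.log(harmonicAmplitudes(9, 10).map(a => a.toFixed(3)));
```

sin³ x works out to (3 sin x - sin 3x)/4, so the second line should show 0.750 at harmonic 1 and 0.250 at harmonic 3. Odd powers only produce odd harmonics, and sin⁹ x reaches up to the 9th.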

Raw frequency isn't a convenient unit for writing tunes; we want pitch in semitones instead. The actual function I ended up with was:

function r(o,p,f,e) {

return 4000*Math.pow(Math.sin(o*Math.pow(2,(p-53)/12)),e) * Math.exp(-o * f);

}

Here o is the offset in samples from the start of the note, p is the pitch in semitones (adjusted so that useful values are just above zero), f is the decay constant, and e is the exponent. This generates sample values in the range -4000..4000, so about 8 such notes can be summed without overflowing the 16-bit output range (8 × 4000 = 32000).
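The constant 53 and the absent 2π make r's pitch scale easy to misread. Since r passes o·2^((p-53)/12) straight to Math.sin, that expression is in radians per sample; this small conversion to Hz assumes the 32kHz sample rate from part one (pitchToHz is a made-up name).

```javascript
// Frequency (Hz) of sin(o * 2^((p-53)/12)) when o counts samples at 32 kHz.
// This ignores the exponent e; an even e doubles the fundamental.
const SAMPLE_RATE = 32000;

function pitchToHz(p) {
  const radiansPerSample = Math.pow(2, (p - 53) / 12);
  return radiansPerSample * SAMPLE_RATE / (2 * Math.PI);
}

for (const p of [1, 13, 15, 25]) {
  console.log(p, pitchToHz(p).toFixed(1));
}
```

Every 12 steps of p doubles the frequency, and p = 1 comes out at roughly 250 Hz.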

And the calling code:

z += (t||(l==3))?

(r(u, p[l], 3e-4,25) + 2*r(u, p[l]-24, 2e-4, 80)):

r(u, p[l]-10?p[l]:13+((i/960)&2), 4e-4,9);

This takes some breaking down.

(t||(l==3))

This determines whether we want the bass sound (when the channel l is 3, or always during the thuddy chords) or the lead sound.

(r(u, p[l], 3e-4,25) + 2*r(u, p[l]-24, 2e-4, 80))

The bass sound is made up of two calls to r, one with exponent of 25, and one twice as loud and two octaves (24 semitones) lower with an exponent of 80. When the exponent is even the fundamental frequency is doubled so it's actually only one octave lower. The decay constants are slightly different so that the timbre changes over time.
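The octave arithmetic can be checked numerically. In this sketch (function names are made up, 32kHz assumed), fundamentalHz doubles the frequency for even exponents: an even power folds the negative half-cycles upward, so the waveform repeats every π rather than every 2π.

```javascript
// Fundamental frequency of sin^e(o * 2^((p-53)/12)) at the given sample rate.
function fundamentalHz(p, e, sampleRate = 32000) {
  const base = sampleRate * Math.pow(2, (p - 53) / 12) / (2 * Math.PI);
  return e % 2 === 0 ? 2 * base : base;
}

// The two layers of the bass: r(u, p, 3e-4, 25) and 2*r(u, p-24, 2e-4, 80).
function bassLayers(p) {
  return {
    upper: fundamentalHz(p, 25),      // odd exponent: fundamental unchanged
    lower: fundamentalHz(p - 24, 80), // two octaves down, doubled back up one
  };
}

const layers = bassLayers(20);
console.log((layers.upper / layers.lower).toFixed(3)); // 2.000, i.e. one octave

// Numerical check of the period halving for an even power.
const x = 0.7;
console.log(Math.abs(Math.pow(Math.sin(x + Math.PI), 2) - Math.pow(Math.sin(x), 2)) < 1e-12); // true
```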

r(u, p[l]-10?p[l]:13+((i/960)&2), 4e-4,9);

This is the melody sound, previously dissected.

p[l]-10?p[l]:13+((i/960)&2)

A hack for the mordent. p[l] is the pitch stored in the pattern data, which is updated every event, or 3840 samples. If the pitch is not equal to 10, it is used directly. Pitch 10, a value not needed for the tune, is used as a sentinel meaning "pitch 13 for half an event, then pitch 15 for half an event".
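Pulled out as a standalone function (the name effectivePitch is made up), the mordent expression behaves as follows. It relies on events starting at multiples of 3840 samples: 3840 is 4 × 960, so (i/960)&2 is 0 for the first 1920 samples of every event and 2 for the rest.

```javascript
// Pitch lookup with the mordent hack: pattern pitch 10 is a sentinel.
// i is the absolute sample index; (i/960)&2 truncates i/960 to an integer
// and flips between 0 and 2 every 1920 samples (half an event).
function effectivePitch(patternPitch, i) {
  return patternPitch - 10 ? patternPitch : 13 + ((i / 960) & 2);
}

console.log(effectivePitch(22, 0));    // 22: ordinary pitches pass through
console.log(effectivePitch(10, 0));    // 13: first half of the event
console.log(effectivePitch(10, 1920)); // 15: second half of the event
```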

Noises

The bass drum is simply a sine wave with descending frequency:

return Math.sin(2e5/(i+1948));

The number 1948 was chosen so that after 3840 samples (one event) the result will be approximately zero to avoid clicking.
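A quick numerical check of the bass drum (helper names are made up; 32kHz assumed). Because the phase is 2e5/(i+1948), its rate of change, and hence the pitch, falls off as 1/(i+1948)².

```javascript
// Bass drum from the text: a sine whose phase is 2e5/(i+1948).
function bassDrum(i) {
  return Math.sin(2e5 / (i + 1948));
}

// Instantaneous frequency in Hz: the phase changes by
// 2e5/(i+1948)^2 radians per sample (decreasing), converted at 32 kHz.
function bassDrumHz(i, sampleRate = 32000) {
  const radiansPerSample = 2e5 / Math.pow(i + 1948, 2);
  return radiansPerSample * sampleRate / (2 * Math.PI);
}

console.log(bassDrum(3840).toFixed(4));   // close to zero at the end of the event
console.log(bassDrumHz(0).toFixed(1));    // start of the sweep
console.log(bassDrumHz(3840).toFixed(1)); // end of the sweep
```

The sweep runs from roughly 268 Hz down to about 30 Hz over one event, and the final sample is within a few thousandths of zero because the phase there is just under 11π.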

The remaining percussion (hi-hat and snare—I had to jettison the bongoids for space reasons) were something resembling white noise with exponential decay. I decided to avoid Math.random() in favour of some deterministic bit-twiddling.

Here's the snare:

return ((8e10/(i+6e3))&14)*Math.exp(-i/900)/14;
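Here is the snare line as a standalone function with the bit-twiddling spelled out in comments. It is a restatement for experimentation, not a different algorithm.

```javascript
// Snare from the text. Within one 3840-sample event, 8e10/(i+6e3) is a large
// float that changes by roughly 800-2200 per sample; "& 14" converts it to a
// 32-bit integer and keeps bits 1-3, which jump around in a noise-like way.
// Dividing by 14 and applying the decay keeps results in 0..1.
function snare(i) {
  return ((8e10 / (i + 6e3)) & 14) * Math.exp(-i / 900) / 14;
}

// Unlike Math.random(), the same index always gives the same sample.
const first = Array.from({ length: 8 }, (_, i) => snare(i).toFixed(3));
console.log(first.join(" "));
```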

The hat has three different variants with slightly different decay constants.

Here's the longest:

return ((8e10/(i+3e5)*(i*17|5)&511))*Math.exp(-i/1000)/500;
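The hat expression leans on operator precedence, which is easy to misread. This sketch rewrites it with the grouping made explicit; the two functions should agree sample for sample.

```javascript
// Longest hi-hat variant, exactly as in the text.
function hat(i) {
  return ((8e10 / (i + 3e5) * (i * 17 | 5) & 511)) * Math.exp(-i / 1000) / 500;
}

// Same expression with the implicit grouping spelled out: / and * bind
// tighter than &, so the whole product is masked to its low 9 bits (0..511).
// The product can exceed 2^31, but & first wraps it to a 32-bit integer,
// which doesn't disturb the low 9 bits.
function hatExplicit(i) {
  const product = (8e10 / (i + 3e5)) * ((i * 17) | 5);
  return (product & 511) * Math.exp(-i / 1000) / 500;
}

console.log(hat(1000) === hatExplicit(1000)); // true
```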

The percussion sequences are simple enough to be hard-coded. The hi-hats in context:

var o = i%3840;

var n = (i/3840) |0;

return ((8e10/(o+3e5)*(o*17|5)&511)) * Math.exp(-o*(n%3+1)/1000)/500;
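The per-event factor (n%3+1) cycles through 1, 2, 3, which is where the three hat variants come from. Assuming 32kHz, this converts each to a time constant (the function name is made up).

```javascript
// Time for the hat envelope exp(-o*(n%3+1)/1000) to fall to 1/e,
// where o is the offset within the event and n is the event index.
function hatDecayTimeMs(n, sampleRate = 32000) {
  const samplesToOneOverE = 1000 / (n % 3 + 1);
  return samplesToOneOverE / sampleRate * 1000;
}

for (let n = 0; n < 3; n++) {
  console.log(`event ${n}: envelope falls to 1/e after ${hatDecayTimeMs(n).toFixed(1)} ms`);
}
```

That is roughly 31, 16 and 10 ms, so the hats get progressively shorter across each group of three events.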

And finally the percussion in its entirety:

var z = 0;

var m = ((i / 3840 / 15) | 0) + 9;

var o = i%3840;

var n = (i/3840) |0;

if(m<20) {

/* hat */

if(m>9)

z += ((8e10/(o+3e5)*(o*17|5)&511)) *

Math.exp(-o*(n%3+1)/1000) * 8;

/* bd, snare */

z += [m>11 && Math.sin(2e5/(o+1948))*10,0,m>15 && ((8e10/(o+6e3))&14)

* Math.exp(-o/900),0][n&3] * 800;

}

return z / 32768;
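To see the arrangement the percussion code encodes, this sketch keeps only its trigger conditions (activePercussion is a made-up name). m counts 15-event patterns starting from 9, and the [...][n&3] lookup puts the bass drum on every fourth event and the snare two events later.

```javascript
// Which percussion voices the code above triggers at sample index i.
// Events are 3840 samples long; n is the event index.
function activePercussion(i) {
  const m = ((i / 3840 / 15) | 0) + 9;
  const n = (i / 3840) | 0;
  const voices = [];
  if (m < 20) {
    if (m > 9) voices.push("hat");
    if ((n & 3) === 0 && m > 11) voices.push("bd");
    if ((n & 3) === 2 && m > 15) voices.push("snare");
  }
  return voices;
}

console.log(activePercussion(0).join(","));          // empty: no percussion yet
console.log(activePercussion(48 * 3840).join(","));  // hat,bd
console.log(activePercussion(106 * 3840).join(",")); // hat,snare
```

So the hats enter with the second pattern, the bass drum with the fourth, the snare with the eighth, and all percussion stops from the twelfth pattern onwards.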

So there you have it. Despite all of this, the instruments were easily the weakest part of the demo.

Still to come: pattern encoding.

[1] One of the fun things about making music electronically is that it's entirely possible to get by without knowing what your instruments are conventionally called, or whether they even have a physical counterpart at all.

Crystal Maze theme 1

I've been getting my nostalgia on recently by watching The Crystal Maze. My main takeaway (other than that surely sliding block puzzles aren't that hard?) is that the theme tune badly needs remixing. But before embarking on such a project, I knew of a computer adaptation for the Archimedes, a platform with which I'm deeply familiar. I'd played the game (well, the demo) back in the day: I even still had a copy, although it had suffered bitrot and would crash on startup. It presumably contained the theme tune. Now I just had to extract it.

It's been many years since I did any Acorn hacking, but this turned out to be remarkably straightforward. The presence of TrackerModule in the game's Modules directory was a dead giveaway. TrackerModule can play precisely three file formats: Soundtracker, Protracker, and its native format, the almost-lost-to-history Archimedes Tracker. Soundtracker doesn't have any magic numbers to speak of, but even in 1993 nobody used such an obsolete format. Happily the others do: in particular, Archimedes Tracker files start with the string "MUSX", and lo, three data files contain that string. Not quite that easy though, as they're embedded in some kind of custom archive format that I'm not about to reverse engineer.1
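Locating embedded tunes by their magic string is easy to reproduce. This is a minimal sketch rather than necessarily the method used here; findMagic is a made-up name, and grep would do the same job.

```javascript
// Report every offset at which the ASCII magic "MUSX" (the Archimedes
// Tracker signature) appears in a block of bytes.
function findMagic(bytes, magic = "MUSX") {
  const needle = Array.from(magic, c => c.charCodeAt(0));
  const hits = [];
  for (let i = 0; i + needle.length <= bytes.length; i++) {
    if (needle.every((b, k) => bytes[i + k] === b)) hits.push(i);
  }
  return hits;
}

// Made-up data: a tune embedded 5 bytes into an archive.
const blob = new Uint8Array([1, 2, 3, 4, 5, 0x4d, 0x55, 0x53, 0x58, 0, 0]);
console.log(findMagic(blob).join(", ")); // 5
```

In Node, a Buffer from fs.readFileSync can be passed in directly, since Buffer is a Uint8Array.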

Instead I turned to the game itself. Will it unpack the tune for me? I found a pristine copy of the demo—pristine enough to get to the title screen before crashing anyway—and listened for the first time in about two decades to its remarkably poor quality rendition of a fragment of the theme tune. Hardly worth ripping, but no point leaving a job half done. It turned out the crashing was actually an asset: the tune would be left in memory, I didn't even have to break out a debugger! The game normally cleaned up after itself, but that was easily remedied.2

So, the game has quit, and TrackerModule is still loaded. *PlayStatus? Address exception. Well, of course, the tune was in application workspace. Retry outside of desktop, *Modules gives me the workspace address, *PlayStatus gives me the length (also "Converted from Amiga" *sigh*), save it out and we're done.

Since nothing can read Archimedes Tracker these days3, since it started off as an Amiga format anyway, and since I just happen, once upon a time, to have written a converter, I converted the tune back to Protracker. So here it is in all its non-glory. The main executable contains the string "Thanks to Mark Vanstone for the tracker music"—so now we know.

I wonder what the other tunes were.

[1] I'm also struck by how much data compression has come along since the early nineties. These days no compressor I can imagine would leave recognizable ASCII strings behind: what a waste of entropy!

[2] RISC OS's command line interpreter (and its predecessor on the BBC micro) is, at least in my experience, unique in using a single vertical bar as a comment character.

It's about time

{blog.,}sphere.chronosempire.org.uk now has SSL (indeed, TLS) enabled.1 After the last mishap, this time everything went smoooth.

I've stopped short of redirecting HTTP requests to the HTTPS version, but I have enabled HSTS, so if you visit the above domains with HTTPS once, your browser will remember to always use encrypted connections in the future regardless of what protocol you request. This is a Good Thing™.

While I often post links to or include resources from sphere on this blog, happily I had the foresight to not use protocol-specific URLs.2 Thus even cross-domain resources will be fetched using whatever protocol the originating request used, for maximum compatibility, security and avoidance of tedious warnings and broken padlocks. This doesn't help with embedding resources from domains not under my control of course—I can't require that other sites support encryption—but that's a fool's errand anyway.
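The resolution rule is easy to demonstrate with the standard URL constructor, available in browsers and Node: a scheme-relative reference takes its scheme from the base it's resolved against.

```javascript
// A scheme-relative reference ("//host/path") inherits the scheme of the
// page it appears on.
const link = "//sphere.chronosempire.org.uk/~HEx/";

for (const page of [
  "http://blog.sphere.chronosempire.org.uk/",
  "https://blog.sphere.chronosempire.org.uk/",
]) {
  console.log(new URL(link, page).href);
}
// http://sphere.chronosempire.org.uk/~HEx/
// https://sphere.chronosempire.org.uk/~HEx/
```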

[1] Also fooble.org.

[2] The syntax for this is simply "//domain/path", e.g. <a href="//sphere.chronosempire.org.uk/~HEx/">my home page</a>. This trick ought to be more widely known.

Getting familiar with MIDI

So I recently came into possession of a Yamaha P-80 digital piano. Now that my MIDI cable has finally arrived I've been toying with controlling it by computer. MIDI is a terrible protocol, with no concept of plug-and-play whatsoever. And since the P-80 has a very limited set of instruments, with only a token attempt at mapping them onto General MIDI, any software trying to control it needs to have a preset specifically tailored for the P-80.

The program I tried using, Rosegarden, did not. So I made one: p80.rgd. (I haven't played much with the controllers but all the instruments are present and correct.)
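At the protocol level, choosing an instrument usually means sending Bank Select and Program Change messages. This sketch builds those bytes; the bank and program numbers for the P-80's voices aren't listed in this post, so the values used are placeholders, and selectInstrument is a made-up name.

```javascript
// Raw MIDI bytes to pick an instrument on one channel (0-15):
// Bank Select MSB (controller 0), Bank Select LSB (controller 32),
// then Program Change. Data bytes are 7-bit, hence the & 0x7f masks.
function selectInstrument(channel, bankMsb, bankLsb, program) {
  if (!Number.isInteger(channel) || channel < 0 || channel > 15) {
    throw new RangeError("MIDI channel must be 0-15");
  }
  return Uint8Array.from([
    0xb0 | channel, 0x00, bankMsb & 0x7f, // Control Change: Bank Select MSB
    0xb0 | channel, 0x20, bankLsb & 0x7f, // Control Change: Bank Select LSB
    0xc0 | channel, program & 0x7f,       // Program Change
  ]);
}

// Placeholder numbers, not real P-80 voice assignments.
const bytes = selectInstrument(0, 0, 3, 5);
console.log(Array.from(bytes, b => b.toString(16).padStart(2, "0")).join(" "));
// b0 00 00 b0 20 03 c0 05
```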

Here's a MIDI file I used for testing, and here's how it sounds in FluidSynth with the Fluid-R3-GM soundfont1:

Here's a tweaked version for the P-80, and here's the P-80 rendering it:

The tune is the in-game tune from Spring Weekend, a demo version of which was included on a Windows 98 CD I had many moons ago.2

[1] Well, how the first quarter of it sounds, anyway. For some reason (workaround for poor looping facilities?) the file contains four identical copies of the fragment I recorded.

[2] And while I was googling, I came across Raymond Chen on MEP:TPC.

Dillo: a eulogy

Dillo has been my web browser of choice for a decade now. This tends to provoke either blank stares or sniggers depending on whether I've previously told whoever I'm talking to about it.1

Dillo is not a browser you would give to Mum and Dad.2 Dillo does not sing and dance and run arbitrary code. Dillo is not Web 2.0. Dillo does not have gradients and rounded corners. Dillo has never heard of HTML5. (Dillo has heard of CSS, but will feign amnesia when asked nicely. Site authors do not know my colour and font preferences better than I do.)

Dillo renders HTML 4.01, and will happily quote the sections of the spec your page is violating. Dillo does not send cookies or Referer headers. Dillo does not claim to be Mozilla only to admit later, in the small print, that that's a lie. Dillo identifies itself as “Dillo”.

Dillo has never once shown me a banner ad. Dillo laughs in the face of pages that plead “refresh me every 30 seconds!”. Dillo never hits the network without permission. Dillo keeps every document ever fetched in memory. (Disk cache? That's what swap is for! And other programs get to use it too!)

Dillo does not run on Microsoft Windows. Dillo requires you to edit configuration files using an actual text editor (remember those?). Dillo is, in short, a browser for purists. It does one thing and does it well. Using Dillo as your sole browser would be pure masochism. But it's lean, it's mean, and it keeps going long after bigger browsers would have keeled over under the weight of all the crap the modern web demands of them.

Sadly, your choice of sites is increasingly limited.3 But a fine test of whether a site is worth visiting is whether it is usable in Dillo. As for me, I rarely browse Wikipedia or search Google or read Hacker News using anything else.

And long may it remain that way.

[1] Of course, nobody has ever heard of Dillo before I tell them about it.

[2] That is, unless you're trying to keep them off the internet.

[3] As a proportion of the sites you might want to visit, that is. I doubt the absolute number of dillo-friendly sites is dwindling. Nonetheless, the day I discovered that Google Groups—an interface to an entirely text-based medium, let's not forget—now required javascript for even minimal functionality is the day I knew the web was doomed.

Ranting: in abeyance

I had a rant planned, but just as I was getting nicely worked up and frothy I happened to read this. And, well, I know when I'm beat.

That is all.

Music reverse-engineering, or, how to pretend that SIDs are mods

One of the goals of the Fooble project is to make music more hacker-friendly; in particular, to expose the internal workings of music whose construction was previously opaque.

The most common way for music to be distributed is as recordings. But reverse-engineering music from a recording is hard. (It's hard for humans. Getting machines to do it is even harder.) Luckily, there are many music formats intermediate in structure between a raw waveform (which is non-trivial to extract information from) and human-editable formats such as Protracker and MIDI (which already have all the information present in a usable form).

One obvious example is the Commodore 64's SID format. As a file format, SID long postdates the C64 itself. It consists of a header followed by 6502 machine code: when executed in the correct environment, this code writes to the memory-mapped I/O registers that control the SID chip itself. Can we turn this into something resembling readable pattern data? Presumably the patterns are stored in the code somehow. But many different playroutines have been written over the years, and given the activity of the C64 demoscene it seems likely that new ones will continue to be written.1 The only reliable way to recover the information we seek is to treat the code as a black box: execute it, watch what it does, and reconstruct what we can.2

So essentially we are faced with a compression problem. We have a large (indeed, potentially infinite) stream of data, of very low entropy, which we would like to turn into a smaller amount of high-entropy data (patterns, instruments, maybe samples). The problems we face are many. There is no indication where pattern boundaries should lie, or how long the tune is. But happily, the SID offers hardware ADSR volume envelopes: if a tune takes advantage of this we can at least identify where notes begin.
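Spotting note starts is mostly a matter of watching the gate bit. A minimal sketch, assuming the standard SID register layout (voice control registers at $D404, $D40B and $D412, with GATE in bit 0) and a hypothetical log of {frame, addr, value} writes captured from the emulated playroutine; this is not how modplayjs actually stores things.

```javascript
// Voice control registers for voices 1-3 (SID base address $D400).
const CONTROL_REGS = [0xd404, 0xd40b, 0xd412];

// writes: hypothetical log of { frame, addr, value } in execution order.
// Returns one { frame, voice } entry per 0 -> 1 transition of the GATE bit,
// which is what starts a note's attack phase.
function findNoteStarts(writes) {
  const gate = [0, 0, 0];
  const starts = [];
  for (const { frame, addr, value } of writes) {
    const voice = CONTROL_REGS.indexOf(addr);
    if (voice < 0) continue; // not a control register
    const newGate = value & 1;
    if (newGate === 1 && gate[voice] === 0) starts.push({ frame, voice });
    gate[voice] = newGate;
  }
  return starts;
}

const log = [
  { frame: 0, addr: 0xd404, value: 0x41 }, // pulse + gate on: note starts
  { frame: 1, addr: 0xd404, value: 0x41 }, // gate still on: same note
  { frame: 2, addr: 0xd404, value: 0x40 }, // gate off: release
  { frame: 3, addr: 0xd404, value: 0x41 }, // gate on again: new note
  { frame: 3, addr: 0xd40b, value: 0x21 }, // sawtooth + gate on voice 2
];
console.log(findNoteStarts(log));
```

This only helps for tunes that actually use the hardware envelopes.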

Enough waffling. Demo time!

- Bad Scene Poetry (4-Mat, 2002)

- Ghouls 'n' Ghosts (Tim Follin, 1989)

- Comic Bakery (Martin Galway, 1986)

- Driller (Matt Gray, 1987)

- Blues in A minor and other keys (Hein Holt, 2005)

- Stereo of '11 (Conrad, 2011)

(Enterprising readers with a copy of HVSC should have little problem figuring out how to try their favourite tunes.)

The current code lacks many niceties. No cycle counting is done. The CPU is assumed to be infinitely fast, thus events occur precisely at interrupts. Tunes are assumed to set the interrupt frequency only at initialization time: this sets the tempo for the whole tune. Only PSIDs are loaded. A trivial environment is provided that doesn't resemble the C64 much at all. (Many of these problems can be solved at a stroke by using a real C64 emulator. Happily, a javascript port of VICE already exists.)

Also, because modplayjs is currently completely sample-based, much of the SID chip is unemulated. In particular, no filters or ringmod. Variable pulse width is done ickily, by switching between samples. There may be envelope bugs. The problem of finding pattern boundaries has yet to be tackled.

Still, there is good news. Most SIDs play recognizably. Many play reasonably well, modulo the lack of filters. And some simple SIDs have extracted pattern data that resembles what a human would produce. To the best of my knowledge, this is not an approach that anyone has tried before. But as far as I'm concerned, it's certainly a step in the right direction.

[1] It also seems likely that demosceners' playroutines will continue to tend towards the completely undecipherable. Yes, that is 373 bytes of code. (And no, it doesn't render well in modplayjs. Yet.)

[2] That's not to say we can't peek at the code. But it won't necessarily yield good results. In particular, one not-quite-black-box technique I've had only limited success with is to detect looping of the tune by checksumming the state of the emulated machine at each frame. Since the state completely determines future states, a repeated state means a guaranteed loop (and, barring checksum collisions, so does a repeated checksum). This can even be done in constant space.
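The constant-space claim can be made concrete with Floyd's tortoise-and-hare cycle detection. The footnote doesn't say which method was used, so treat this as one possibility: step is a hypothetical stand-in for running the emulator for one frame, and states are plain numbers here so they can be compared directly.

```javascript
// Floyd's cycle detection. If each state depends only on the previous one,
// the sequence must eventually loop; this finds where the loop starts (mu)
// and its length (lambda) while only ever holding two states.
function findLoop(step, start) {
  let tortoise = step(start);
  let hare = step(step(start));
  while (tortoise !== hare) {
    tortoise = step(tortoise);
    hare = step(step(hare));
  }
  // mu: index of the first state that is part of the loop.
  let mu = 0;
  tortoise = start;
  while (tortoise !== hare) {
    tortoise = step(tortoise);
    hare = step(hare);
    mu++;
  }
  // lambda: length of the loop.
  let lambda = 1;
  hare = step(tortoise);
  while (tortoise !== hare) {
    hare = step(hare);
    lambda++;
  }
  return { mu, lambda };
}

// Toy "tune": an intro of states 0..4, then 5..9 repeating forever.
console.log(findLoop(x => (x === 9 ? 5 : x + 1), 0)); // mu 5, lambda 5
```

With a real machine, comparing full states rules out false matches; comparing checksums is cheaper but can collide.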

Fifteen dissection part one: Audio in a browser

[Part zero is here].

Phase 1 of making a music demo in javascript is to work out how to play js-generated PCM audio in a browser. There are two widely available APIs for synthesizing audio in real time, namely the Firefox-specific MozAudio and the much more heavyweight Web Audio (Chrome, Safari). Opera supports neither. Even neglecting Opera, supporting both would require separate code for each, which is a Really Bad Idea when the space constraints are this tight.1

So real time is out. It's possible to put a base64-encoded WAV in a data: URI and pass that to an audio element. This has been fairly widely exploited by this point, and works just about everywhere. http://js1k.com/2013-spring/demo/1388 is a good example of this at work. Neglecting the quantization noise that comes from using 8-bit samples, the main problem here is that notes are triggered using setInterval, which is not a precise timing method, and at least on my setup it sounds very juddery.

Which leaves the final option: generating the entire tune as a single WAV. This has its own problems: there's a delay at startup while megabytes of data are precalculated, the tune can't loop indefinitely (unless you use setInterval again, and that won't be seamless), and memory usage for storing the data: URI is quite high. (Some browsers (*cough* IE) place restrictions on the size of data: URIs too.) Still, it's the best we can do.

I settled on mono 16-bit 32kHz audio for a data rate of 64000 bytes/sec (62.5KiB/sec). (8-bit audio sounds terrible; see above.) Delightfully, browsers offer the ancient and arcane btoa() method for base64 encoding, which at 6 bytes can't be beat. Then the following will make noises:

new Audio('data:audio/wav;base64,'+btoa(header+pcmdata)).play();

The data chunk is built up by iterating the following a few million times.2 Here z is a number in the range -32768..32767, and the bitwise ops force integer conversion:

pcmdata += String.fromCharCode(z&255,(z>>8)&255);
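To make the byte order concrete, here is the same expression wrapped in a function (appendSample is a made-up name) and applied to a few edge cases. WAV stores 16-bit samples little-endian, low byte first.

```javascript
// Append one 16-bit little-endian sample to a "binary string" (one char per
// byte). z is assumed to be an integer in -32768..32767.
function appendSample(pcmdata, z) {
  return pcmdata + String.fromCharCode(z & 255, (z >> 8) & 255);
}

let pcm = "";
for (const z of [0, 1, -1, 0x1234, -32768]) pcm = appendSample(pcm, z);
console.log(Array.from(pcm, c => c.charCodeAt(0).toString(16).padStart(2, "0")).join(" "));
// 00 00 01 00 ff ff 34 12 00 80
```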

Here is the WAV header:

00000000  52 49 46 46 24 00 00 01  57 41 56 45 66 6d 74 20  |RIFF$...WAVEfmt |

00000010  10 00 00 00 01 00 01 00  00 7d 00 00 00 00 00 00  |.........}......|

00000020  02 00 10 00 64 61 74 61  3a 61 75 64              |....data:aud|

To save space the header is stored as a raw string rather than base64-encoded, so we can't use any byte values greater than 0x7f as UTF-8 bloat would more than offset any gains from avoiding base64. Hence 32kHz (0x7d00), which is the highest common rate that is less than 32768. The lengths 0x01000024 and 0x01000000 are simply "sufficiently large" and wildly inaccurate. Similarly the four bytes after "data" are the data length, but since browsers don't check this, we reuse part of the "data:audio/wav" string to increase compression. (It's just as well there's no space to include an <audio> element as the seek bar would get very confused.)
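The dump can be checked by rebuilding the header as a JavaScript string and reading the fields back. This is a verification sketch, not the compressed form used in the entry; readLE is a made-up helper.

```javascript
// The 44-byte header from the dump above, written with string escapes.
// Every byte is below 0x80, so it survives as plain ASCII source.
const header =
  "RIFF$\0\0\x01WAVEfmt " +   // RIFF size 0x01000024 (little-endian)
  "\x10\0\0\0\x01\0\x01\0" + // fmt chunk size 16, PCM, mono
  "\0}\0\0\0\0\0\0" +        // 32000 Hz (0x7d00); byte-rate field is 0
  "\x02\0\x10\0data:aud";    // block align 2, 16 bits, data length ":aud"

// Read a little-endian unsigned field back out of the string.
function readLE(str, offset, size) {
  let value = 0;
  for (let k = size - 1; k >= 0; k--) value = value * 256 + str.charCodeAt(offset + k);
  return value;
}

console.log(header.length, readLE(header, 24, 4), readLE(header, 34, 2)); // 44 32000 16
```

Reading the fields back shows two oddities: the byte-rate field at offset 28 is zero, presumably because the correct value, 64000 (0xfa00), contains a byte above 0x7f, and the data length at offset 40 is 0x6475613a, the ASCII ":aud". Given a PCM string, new Audio('data:audio/wav;base64,' + btoa(header + pcm)).play() would play it in a browser.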

Finally, I discovered at the last minute that submitting entries containing null bytes doesn't work. Sadly there was no time to do anything other than replace them with \0.

Next up: the calculation of those few million z values.

[1] Good news! In the six months since the contest ended, both Firefox and Opera are now shipping with Web Audio. So things will be different next year.

[2] String.fromCharCode? That's 19 bytes. Nineteen! For shame, javascript, for shame. Perl manages with three, and the parens are optional.

SSL (mis)adventures

So I've been meaning to set up SSL on here for a while now—the web being unencrypted by default these days is just silly—and reading this gave me the impetus to give it a try. ($0, you say? Under an hour, you say? Sounds good to me!) My experiences were... frustrating.

Step 1: Register with StartSSL. After I grudgingly gave them all my personal information, I was provided with a client certificate, which my browser (Chromium) promptly rejected. "The server returned an invalid client certificate. Error 502 (net::ERR_NO_PRIVATE_KEY_FOR_CERT)". The end.

Since the auth token they emailed me only worked once, I couldn't try using another browser. So, unsure what to do (and thinking they might appreciate knowing about problems people have using their site, so they can fix them or work around them or even just document them), I fired off an email.

The response I got was less than helpful: "I suggest to simply register again with a Firefox. Make sure that there are no extensions in Firefox that might interfere with the client certificate generation." Gee thanks, I would never have thought of that. And nope, I can't register in Firefox, my email address already has an account associated with it. Perhaps naïvely, I thought StartSSL might frown on people creating multiple accounts (or might like to take the opportunity to purge accounts that will never be used because their owners can't access them), which was why I didn't just create a second account using a different address in the first place. Still, lesson learned, second account created, no problems this time round. Bug fixed for the next person to come along? Not so much.

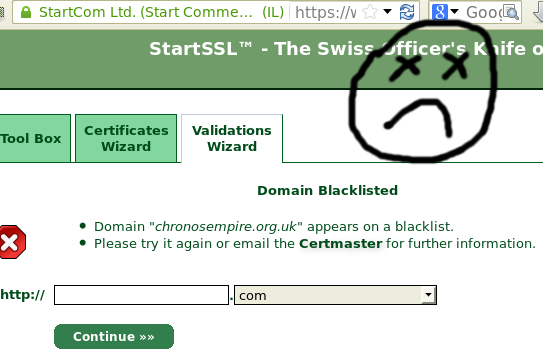

Step 2: Validate my domain. Going into this I was thinking "Hmm, will I need to set up a mail server and MX record so I can prove I can receive mail at my domain? Will the email address WHOIS has suffice? What address does WHOIS have, anyway?"

This was premature. Apparently the domain chronosempire.org.uk is blacklisted. Sadness. Not having any clue why, I fired off another email. Turns out it's Google. Google blacklisted me, claiming

"Part of this site was listed for suspicious activity 9 time(s) over the past 90 days."

Nine times? WTF, Google?

The reply continued: "Unfortunately we can't take the risks if such a listing appears in the Class 1 level which is mostly automated. We could however validate your domain manually in the Class 2 level if you wish to do so.". I am confused as to what risks there are to StartSSL (I thought they were only verifying my ownership of the domain, which I'm pretty sure is not in doubt), and how those risks would go away if I paid them more than $0 for a Class 2 cert.1

Still, StartSSL is just the messenger here. Google recommends I use Webmaster Tools to find out more, so I dig out my rarely-used Google account, get given an HTML file to put in my wwwroot, let Google verify I've done so, and finally I find out what this is about.

I have a copy of Kazaa Lite in my (publicly-indexed) tmp directory. Apparently some time around June 2004 I needed to send it to someone, and it's been there ever since.2 This should not come as any surprise to anyone who knows of my involvement in giFT-FastTrack, but more to the point, Kazaa Lite is not malware. Not only is it not malware, it not being malware is the entire reason for Kazaa Lite's existence.

Sadly, whether it is or is not malware is irrelevant. "Google has detected harmful code on your site and will display a warning to users when they attempt to visit your pages from Google search results." Nice. So now I have to refrain from putting random executables in my tmp dir in case they make Google hate me? (Total hits for the file in question over the past few months: 14. Hits that weren't Googlebot: zero. In fact, I'm pretty sure not a single actual human has fetched it in the past, say, five years.)

Anyway. A quick dose of pragmatism and chmod later and my site is squeaky-clean! Now I guess I have to wait 90 days for Google to concur. Which is perhaps just as well, as I've already spent substantially more than an hour on this, I've not even started configuring my web server or making a CSR, and my enthusiasm is as low as the number of people desperate for my copy of Kazaa Lite.

[1] Maybe I'm being overly cynical here and they would actually use the money to check... something? What? I have no idea.

[2] I firmly believe in not breaking URLs unnecessarily. That's my story and I'm sticking to it. It has nothing whatsoever to do with me never cleaning up my filesystem.

Fifteen dissection part zero: History

This is part zero of a dissection of my recent JS1K submission Fifteen, a 1K javascript audio demo. In this part: history of the tune.

In August 2004 I wrote an unnamed tune using soundtracker. It got the temporary name "f", because it's in 15/8 time and 15 is 0xf in hex. As was my custom, snapshots got an incrementing version number stuck on the end, and the "final" version was called f4.xm. I never got round to properly naming or distributing it, but it seemed well received by the few friends I showed it to.

Here's the original xm (or rendered in-browser for your convenience).

Fast forward four years to July 2008. My friend Kinetic had just started serious hacking on a project he'd had in mind for a long while, namely modding the Amiga game Lemmings with new levels, graphics and music. Since he liked my tune, I set myself the challenge of squeezing it into the constraints that would allow playback within the game.

Lemmings has a particularly unsophisticated playroutine. Its capabilities are a tiny subset of those of Protracker: the only supported effect is "set volume", although an initial speed can be set. Only three channels are available as the fourth is reserved for sound effects; maximum 15 samples per tune, and no finetunes. In addition, the entire tune had to fit into 47000 bytes―the game ran on a 512K Amiga so memory was tight. Nonetheless, to my (and Kinetic's!) surprise and delight, I succeeded in making something that sounded very much like the original 8-channel tune.

Here's the 3-channel version (browser).1

And here's a video of it playing in Lemmings.

Fast forward another four years. So JS1K came round and I'd been musing over the idea of submitting something audio-related. Since rule number one of optimization is to have a stable starting point, I needed a tune already written. After my previous success, f4.xm seemed worth trying, although I was under no illusions that it would survive such a drastic excision unscathed.

Next up: so how do you squeeze something like this into 1K?

[1] Alert readers might spot that this file is larger than 47000 bytes. The game's internal file format stored a stream of three-byte (note, sample, volume) tuples with RLE of empty events, making the pattern data smaller than Protracker's encoding. Samples were of course uncompressed to allow Paula to suck them directly out of RAM.
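The footnote's idea (three-byte events plus run-length encoding of empty ones) can be sketched as follows. The real Lemmings byte layout isn't documented here, so the 0xff marker and the run-count byte are invented purely for illustration, and encodeChannel is a made-up name.

```javascript
// Hypothetical encoder: each non-empty event becomes a (note, sample, volume)
// triple, and runs of empty events become (EMPTY_RUN, count, 0).
// Note values must stay below EMPTY_RUN to avoid ambiguity.
const EMPTY_RUN = 0xff;

function encodeChannel(events) {
  // events: array of null (empty) or { note, sample, volume }, each 0..254
  const out = [];
  let run = 0;
  const flush = () => {
    while (run > 0) {
      const n = Math.min(run, 255);
      out.push(EMPTY_RUN, n, 0); // keep everything in three-byte units
      run -= n;
    }
  };
  for (const e of events) {
    if (e === null) { run++; continue; }
    flush();
    out.push(e.note, e.sample, e.volume);
  }
  flush();
  return Uint8Array.from(out);
}

const note = (n) => ({ note: n, sample: 2, volume: 64 });
console.log(Array.from(encodeChannel([note(1), null, null, null, note(3)])).join(" "));
// 1 2 64 255 3 0 3 2 64
```

For comparison, Protracker spends four bytes per channel per row whether or not anything happens, which is why skipping empty events pays off.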